THE AI LOOP

Hi everyone,

I want to be direct.

For the past seven weeks, this newsletter has focused on AI news. After doing that consistently, I’ve come to a simple conclusion: news is noise.

Most businesses don’t struggle with awareness. They struggle with judgment. The real question isn’t “What happened in AI this week?” It is “What is actually worth doing?”

My day job is building AI systems that have to work in the real world. That experience has made one thing clear: many GenAI ideas sound compelling in slides, but deliver very little value once they meet real data and real constraints.

So, starting today, The AI Loop is changing.

Instead of headlines, every week I will break down one concrete GenAI use case to help you decide:

Where is the ROI?

What are the failure modes?

Is it actually worth building?

Let's dive into the first one.

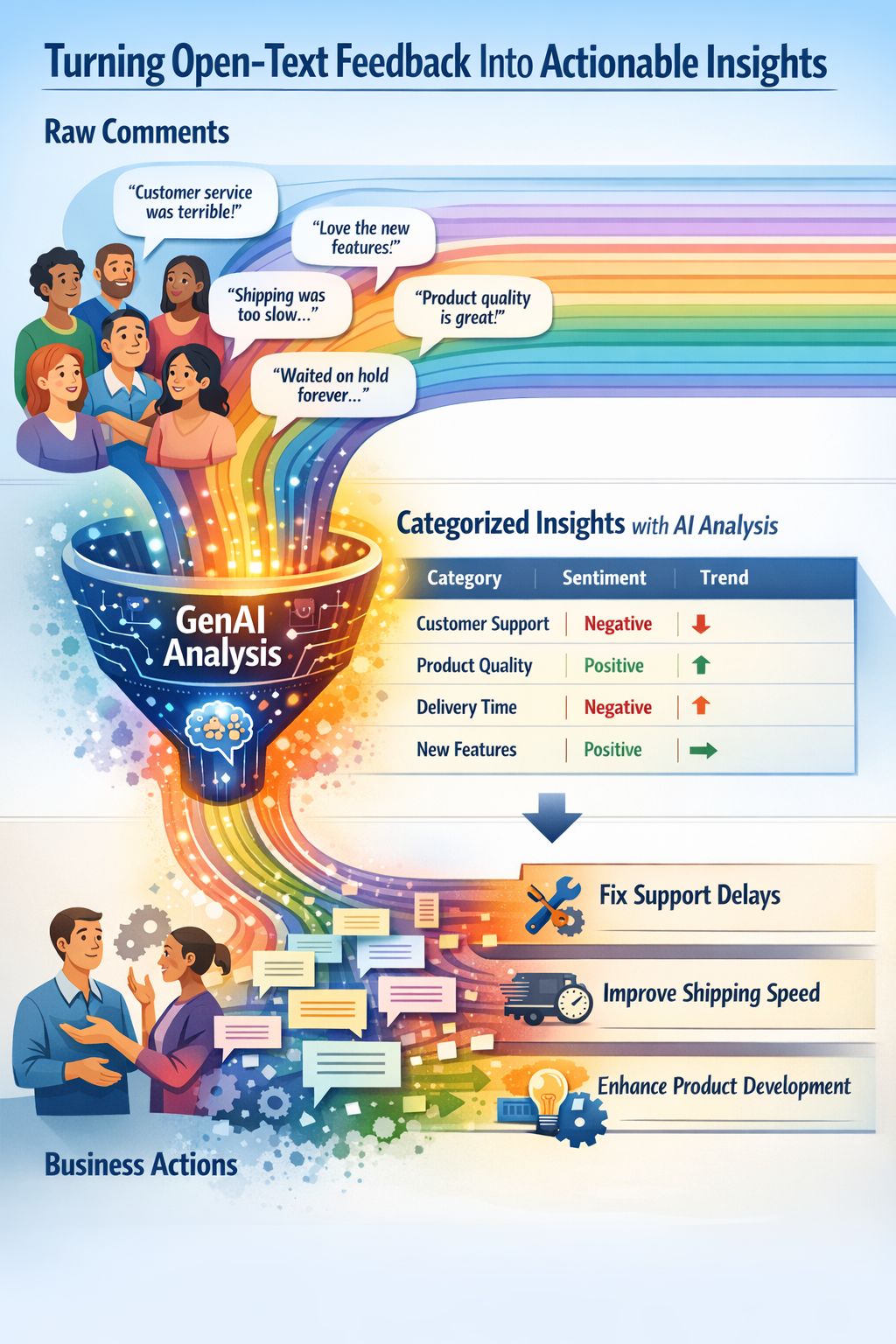

01. The Use Case: Automated Survey Insights

The Concept: Turn open-text survey comments into consistent, business-defined categories so leaders can track themes over time instead of reading thousands of individual comments.

The Transformation:

Input: "Support was slow but the product works well."

GenAI Output:

[Category: Support | Sentiment: Negative] AND [Category: Product Reliability | Sentiment: Positive]
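For the technically curious, here is a minimal sketch of how that output could be held in code. It assumes the model is asked to reply with a JSON list of objects with "category" and "sentiment" keys; that response shape, the Tag class, and the parse_tags helper are my illustration here, not a fixed format.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Tag:
    """One (category, sentiment) pair extracted from a survey comment."""
    category: str
    sentiment: str


def parse_tags(raw_json: str) -> list[Tag]:
    """Parse a response assumed to be a JSON list of {category, sentiment} objects."""
    return [Tag(item["category"], item["sentiment"]) for item in json.loads(raw_json)]


# Hypothetical model response for "Support was slow but the product works well."
example_response = (
    '[{"category": "Support", "sentiment": "Negative"},'
    ' {"category": "Product Reliability", "sentiment": "Positive"}]'
)
tags = parse_tags(example_response)
```

One comment can carry more than one tag, which is why the result is a list rather than a single label.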

Where the Value Lives

Speed: Time to insight drops from weeks (manual reading) to minutes.

Decision Quality: Moves you from decisions based on anecdotes ("I saw a comment about X") to decisions based on data ("20% of churn drivers are related to X").

Cost: Removes the manual drudgery of tagging recurring surveys.
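To make the "decisions based on data" point concrete, here is a rough sketch of turning tagged comments into theme shares. The five sample comments are made up purely for illustration, and theme_shares is a hypothetical helper name.

```python
from collections import Counter


def theme_shares(tagged_comments):
    """Return the fraction of comments that mention each category at least once."""
    counts = Counter()
    for tags in tagged_comments:
        # Count a category once per comment, even if it is tagged twice.
        for category in {category for category, _sentiment in tags}:
            counts[category] += 1
    total = len(tagged_comments)
    return {category: n / total for category, n in counts.items()} if total else {}


# Made-up sample: each inner list holds the tags for one survey comment.
sample = [
    [("Support", "Negative"), ("Product Reliability", "Positive")],
    [("Pricing", "Negative")],
    [("Support", "Negative")],
    [("Support", "Positive")],
    [("Pricing", "Negative")],
]
shares = theme_shares(sample)
```

In this toy sample, Support appears in 3 of 5 comments. That kind of number can go on a trend line, as long as the categories stay stable between runs.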

The Workflow

This sits right after survey collection and before your monthly insights readout.

Replaces: Ad-hoc manual reading and "word clouds."

Enables: A repeatable "theme tracker" that links to operational metrics.

⚠️ The Failure Modes (Read this carefully)

If you build this, here is where it will break:

Taxonomy Drift: If the AI changes how it defines a category month-over-month, your trend lines are fake. The prompt needs strict constraints.

The Action Gap: If the output is just "interesting data" but isn't owned by a specific department, nothing happens.

False Precision: Executives might treat the sentiment score as an absolute truth. It is a signal, not a fact.
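On Taxonomy Drift specifically, one way to apply "strict constraints" is to lock the label set in code, spell it out in the prompt, and reject anything the model returns outside it. This is a sketch under assumptions: the category and sentiment lists, the version label, and the function names are all placeholders for your own schema.

```python
# Hypothetical locked taxonomy; change it only deliberately, and bump the version.
SCHEMA_VERSION = "v1"
ALLOWED_CATEGORIES = ("Support", "Product Reliability", "Pricing", "Other")
ALLOWED_SENTIMENTS = ("Positive", "Negative", "Neutral")


def build_prompt(comment: str) -> str:
    """Build a prompt that lists the only labels the model may use."""
    return (
        f"Taxonomy version: {SCHEMA_VERSION}\n"
        f"Allowed categories: {', '.join(ALLOWED_CATEGORIES)}\n"
        f"Allowed sentiments: {', '.join(ALLOWED_SENTIMENTS)}\n"
        "Tag the comment using only the labels above. "
        "Use 'Other' if nothing fits.\n"
        f"Comment: {comment}"
    )


def split_valid(tags):
    """Separate tags that match the locked taxonomy from those that do not."""
    valid, rejected = [], []
    for category, sentiment in tags:
        if category in ALLOWED_CATEGORIES and sentiment in ALLOWED_SENTIMENTS:
            valid.append((category, sentiment))
        else:
            rejected.append((category, sentiment))
    return valid, rejected


# "Customer Care" is not in the schema, so it is rejected rather than
# silently becoming a new category in next month's chart.
valid, rejected = split_valid([("Support", "Negative"), ("Customer Care", "Negative")])
```

Rejected tags are worth logging and reviewing. A rising count can be a hint that the schema needs a deliberate, versioned update.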

The "Senior Engineer" Verdict

Build or Buy? Prototype internally first. The value here depends on your specific category schema, not the tool. Test this with a lightweight script ($5 API cost) before committing to a $50k Enterprise SaaS platform.

The Bottom Line: The upside is real (better decisions, faster cycles), but only if you lock a stable schema and tie outputs to owners. Without that, it’s just a polished dashboard that changes nothing.

Want the code? I am writing a technical breakdown of exactly how to build this pipeline (Python + OpenAI) on my Medium blog later this week. I'll share the link on LinkedIn when it's live.

Question for you: What is one manual process in your business that feels like a waste of human intelligence? Reply and let me know. I might break it down in next week's edition.

Until next week,

Asim - The AI Loop